Welcome to the Age of Slop

AI slop is the word currently spreading around the internet like a plague. It is a relatively new but already well-known phenomenon in digital spaces. AI slop is low-quality, mass-produced digital content generated by AI tools with little to no human creativity or input involved.

It is often uninspired junk content, such as text, images, and video, that is used to flood social media for engagement or ad revenue.

The word became so commonly used and understood that it was named 2025's word of the year by both Merriam-Webster and the Macquarie Dictionary. (Source) (Source)

But the word itself implies something worth sitting with: slop makes a mess of digital spaces. And a mess implies that someone has to clean it up.

The question is: who?

A problem without a custodian

It is a deceptively simple question with a complicated answer. The internet was not designed with a custodian in mind. The systems, institutions, and organisations that exist to protect the public from harmful information were built for a slower, more predictable world. AI slop does not operate by those rules.

It moves fast, it scales instantly, and it does not wait for anyone to catch up. Research shows that false news is 70% more likely to spread than true news, and viral false stories travel approximately six times faster than corrections. (Source)

The public cleaners of AI slop

The obvious first answer would be public institutions. They make the rules, and they enforce them. But the problem with making them the primary force against AI slop is that the two move at completely different rhythms. Public institutions are known for being slow and deliberate.

AI slop is known for being fast and belligerent. They are total opposites of each other, and in this specific scenario, being too slow and deliberate is causing a lot more harm than good.

When slow governance meets fast disinformation

The consequences of that mismatch are already visible. AI slop is eroding public trust in government institutions. It either shows that governments cannot move fast enough to keep up, or it is weaponised by malicious actors to damage public trust through harmful narratives that undermine key democratic processes.

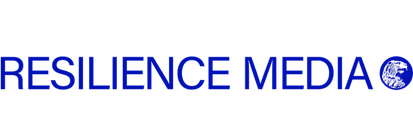

During the 2024 US election cycle, AI-generated robocalls, deepfake videos of candidates, and fabricated news articles spread across social media faster than any regulatory body could respond. (Source)

The damage to public perception was done long before any official correction arrived.

There is also the question of who is actually responsible for tackling it. Nobody really knows. The phenomenon is so new that multiple government departments are all trying to address it in different ways, with overlapping mandates and no coherent, unified strategy.

The EU AI Act's high-risk provisions were delayed until 2027 following pressure from the tech industry, a stark illustration of just how far behind the regulatory curve public institutions currently sit. (Source)

The private cleaners of AI slop

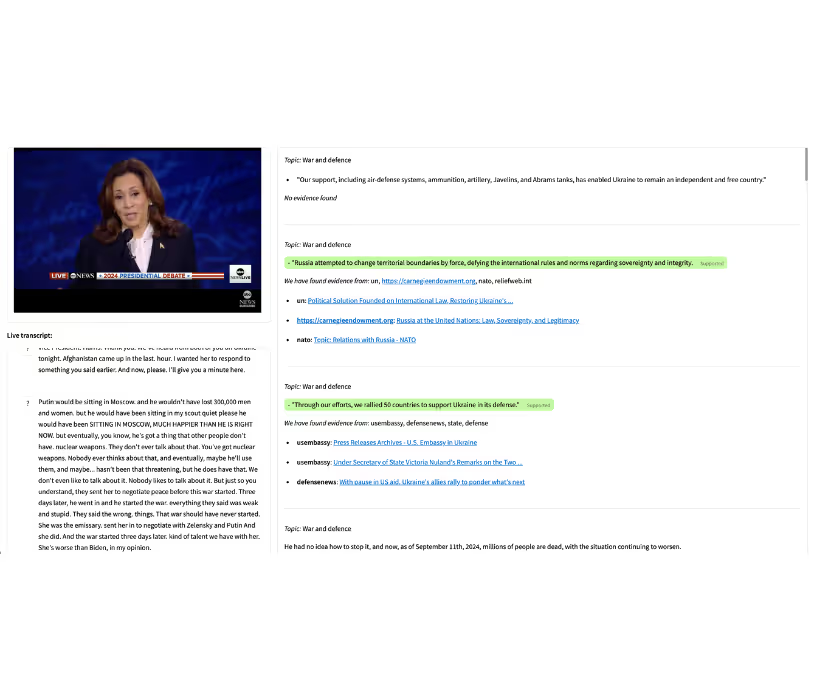

The other answer is media and journalism organisations, which are currently doing the most active work on this issue. AI slop is permeating social media platforms like never before, and journalists have noticed. Particular narratives are almost always attached to AI slop images and text, and that is what causes journalists to spring into action.

AI-created imagery and text are among the most commonly reported subjects of fact-checks in newsroom databases at the moment. (Source)

It’s a capacity problem, not a willingness problem

Journalists and fact-checkers push back against it depending on the scale of circulation, often conducting reverse image searches or analysing content through an AI detector. These are valid and effective methods.

But the problem is that they cannot do this for everything on the internet. Media and journalism organisations already face the monumental task of analysing and verifying claims and narratives from actual humans with the limited resources they have.

Adding AI slop to the mix overwhelms their already stretched capacity. (Source)

It is not a failure of effort. It is a failure of capacity against an opponent that does not tire, does not sleep, and produces content at a volume no human team can manually match.

Even as fact-checking response times have shortened significantly in recent years, studies confirm that false narratives embed themselves in public belief within minutes of going live. (Source)

Speed of response is not just important. It is essential to many of the democratic processes that we depend on.

The tools have to match the threat

Depending on either public institutions or media organisations is not going to work unless they are given the tools they need to operate at a faster pace than before. Public institutions need tools that let them act quickly, with bureaucratic roadblocks reduced as far as possible.

Media organisations need to have access to tools that enable them to do more with less, so they can stamp out AI slop's impact while still being able to carry out other types of journalism that matter.

Addressing those needs is essential to minimising the effect AI slop and enshittification have on our society.

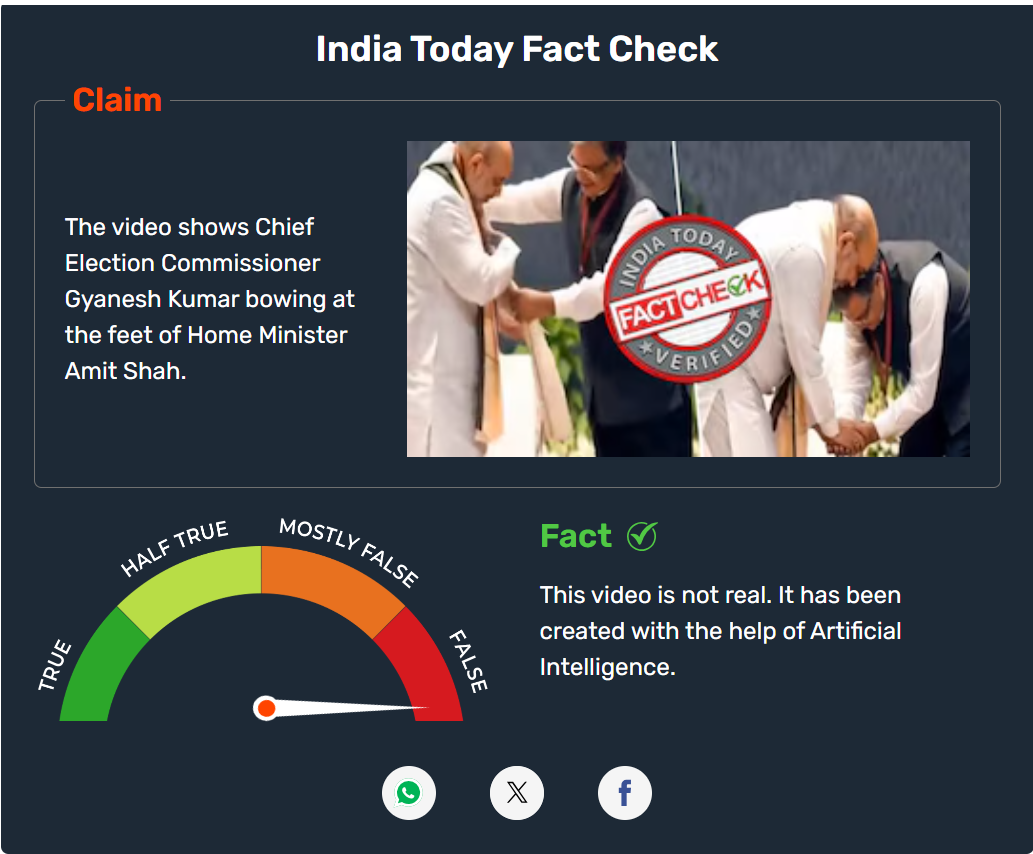

Where Gather comes in

Gather by Factiverse has the potential to do exactly that. AI slop and its narratives do not need to dominate the internet if organisations have access to a real-time media monitoring tool that notifies them when something is spreading too far, too fast.

Transcripts, claims, and analysis of content tied to AI slop can be effectively processed so that the human at the final checkpoint can make a decision fast — and with confidence.

By adopting the same kind of speed that AI slop uses to propagate online, organisations can effectively take back control of the conversations that AI slop has seemingly taken over.

The advantage AI slop currently holds is not sophistication. It is speed.

Close that gap, and the dynamic shifts entirely. Gather is a tool that enables control of AI slop, and in an information environment that is only going to get noisier, that kind of control is not optional. It is overdue.

You can sign up to the waitlist here: https://www.factiverse.ai/gather

Sources:

- Merriam-Webster — https://www.merriam-webster.com/wordplay/word-of-the-year

- Macquarie Dictionary — https://www.macquariedictionary.com.au/macquarie-dictionary-word-of-the-year-for-2025/

- PMC — https://pmc.ncbi.nlm.nih.gov/articles/PMC9548403/

- Brennan Center for Justice — https://www.brennancenter.org/our-work/analysis-opinion/gauging-ai-threat-free-and-fair-elections

- Reuters — https://www.reuters.com/sustainability/boards-policy-regulation/eu-delay-high-risk-ai-rules-until-2027-after-big-tech-pushback

- Full Fact — https://fullfact.org/policy/reports/full-fact-report-2025/

- Al Jazeera Media Institute — https://institute.aljazeera.net/en/ajr/article/2861
